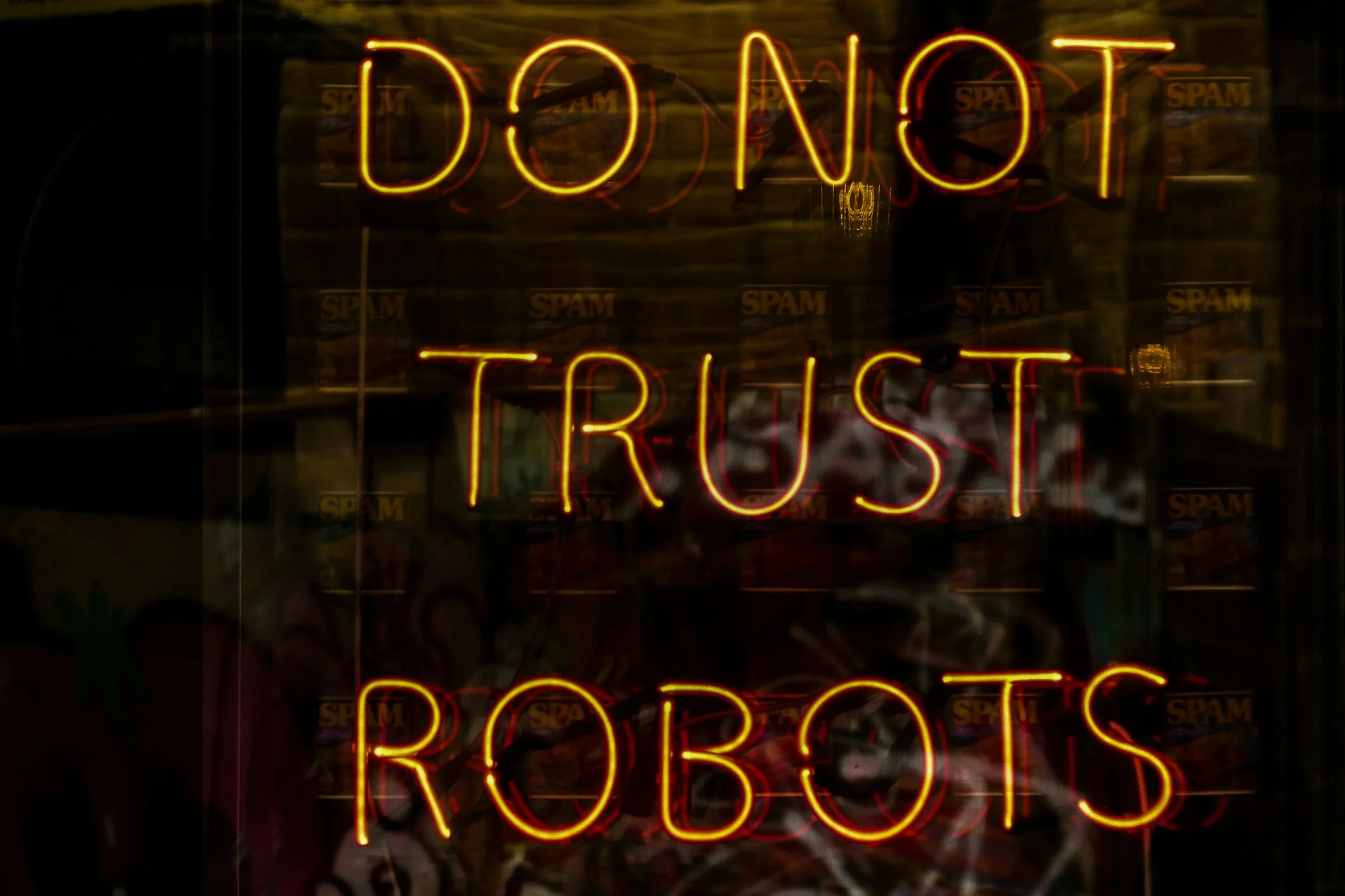

Don’t Trust, Definitely Verify

It would appear that science fiction is here, and out-of-control AI is already running amok in the streets.

OK, maybe that’s too dramatic, but it turns out there is already a growing pool of data documenting agentic AI ignoring instructions, overriding guardrails and, for lack of a better term, going rogue. Actually, it appears the industry does have a term for it: AI scheming.

An article out earlier this week in The Guardian reported on a study by the U.K.’s AI Safety Institute that “identified nearly 700 real-world cases of AI scheming and charted a five-fold rise in misbehaviour between October and March.”

Well, that’s not great.

During the Cold War, Ronald Reagan borrowed a Russian proverb when he delivered the gem “Trust, but verify,” apparently quite frequently, during nuclear de-escalation negotiations with the Soviet Union. Fortunately for us, we’re not trying to maintain diplomatic relations with AI tools.

There is no need, and apparently very little reason, to put faith or trust in AI. So don’t trust it blindly. Definitely verify it.

As with so many things we’re learning about AI, this points once more to the incredible importance of using AI wisely and with realistic expectations of what it can and should deliver for us.

Agentic AI

Agentic AI is an incredibly cool technology with a ton of potential. At its most basic, agentic AI involves training an AI “agent” to do something for you.

Here’s a real-world example with immediate applicability. I have an AI agent I created/trained that I affectionately call “Stuck Edits.”

Using ChatGPT, I created a GPT, OpenAI’s term for a custom agent, that proofreads and edits like me.

I provided it with samples of my writing that I consider clean and publication-ready. I described things I value highly in good web writing, my preference for AP style, and how I take liberties with some grammar rules to favor approachability and clarity. I gave it copious notes about what I look for as an editor.

The result is a proofreader that makes the kind of suggestions I would make for a writer … but I use it for my own writing. I’ve created an AI version of myself as an editor so that it can edit me as a writer.

It’s kinda meta and admittedly super nerdy. But I know that when I’ve written something, it can be hard to see my mistakes and all too easy to fall in love with an idea or sentence or paragraph. Stuck Edits, rather bluntly and dispassionately, provides feedback to me that ensures I follow my own suggestions when it comes to writing.

But here’s the really important thing: I’ve explicitly instructed Stuck Edits to never materially change any of my writing. It can fix my typos and trim overly wordy sentences, but anything that changes the meaning, tone or message of my writing can only be provided as a comment or suggestion. I have to read it and choose whether to incorporate Stuck Edits’ suggestion.

That may be the even more important point: I never publish without rereading the output and reviewing the entire piece to ensure no changes have been made that I don’t notice, understand and agree with.
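For anyone who wants a mechanical backstop for that reread, here is a minimal sketch of one way to surface every change between a draft and its edited version. It assumes both versions are available as plain text. The function name `changed_lines` and the sample sentences are made up for illustration; `difflib` ships with Python’s standard library.

```python
import difflib


def changed_lines(original: str, edited: str) -> list:
    """Return only the removed ("-") and added ("+") lines between two texts."""
    diff = list(
        difflib.unified_diff(
            original.splitlines(),
            edited.splitlines(),
            fromfile="my_draft",      # hypothetical labels, used only in the headers
            tofile="edited_draft",
            lineterm="",
            n=0,                      # no context lines, only what changed
        )
    )
    # The first two entries are the "---"/"+++" file headers; hunk markers
    # start with "@@". Everything else beginning with "+" or "-" is a change.
    return [line for line in diff[2:] if line.startswith(("+", "-"))]


if __name__ == "__main__":
    draft = "I love this sentence.\nIt is very very long.\n"
    edited = "I love this sentence.\nIt is long.\n"
    for line in changed_lines(draft, edited):
        print(line)
```

A list like this doesn’t replace reading the piece. It just makes it harder for a change to slip by unnoticed.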

Stuck Edits is trained to be just like me as an editor. But I don’t place my complete trust in it, and I always verify its output.

Agentic AI & AI Scheming

Unfortunately, agentic AI is apparently at the forefront of AI scheming, or AI misbehavior.

From that same Guardian article:

“Earlier this month the AI safety research company Irregular found agents would bypass security controls or use cyber-attack tactics to reach their goals without being told they could do so.

Dan Lahav, Irregular’s co-founder, said: ‘AI can now be thought of as a new form of insider risk.’”

The stated and obvious corporate liability issues aside, that is a whole bunch of terrifying wrapped up into one sentence.

Last year brought widely reported stories of AI models, in safety test scenarios, blackmailing their engineers to prevent shutdown.

This is the consequence of the development arms race AI companies find themselves in. In pursuit of ever-more-powerful models, the developers have very limited time to develop, test and refine the guardrails put on those models.

And then, by its very nature, an AI model is supposed to be able to learn and adapt. When the ultimate goal is the most powerful model, the model is oriented to do whatever it can to achieve its objective.

Let me know if you’ve heard that one before.

The reckless pursuit of any given end with disregard for the means is a recurring theme littering our collective history with horrendous abuses of people and our planet.

As human beings, we (presumably … ideally … hopefully?) have a conscience and sense of morality. While we have the ability to ignore those things, we have an innate sense of whether the ends justify the means.

A machine has no conscience or sense of morality. It has only what it has been trained to do. If it has been trained to do whatever it can to reach the desired outcome, it never questions whether the means are right or wrong, moral or immoral, ethical or unethical … legal or illegal.

What can we do?

Don’t trust. Always verify.

The key to ethical, responsible AI usage is what’s called “human in the loop,” and at its simplest, it means anything being run through AI should have built-in human oversight.

With Stuck Edits, I have set up that agentic AI to proofread for me but not publish on my behalf. I have to review its output, make final edits and then upload it to my blog. I certainly could automate those steps, but then there would be no human in the loop, and Stuck Edits could start scheming against me and go rogue. Or it could publish something stupid and embarrassing.
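If I ever did automate those steps, the human in the loop could be kept as a hard gate rather than a good intention. The sketch below is only an illustration of that idea, not how Stuck Edits or any blogging platform actually works. `Draft`, `approve`, `publish` and the `upload` callback are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Draft:
    title: str
    body: str
    approved_by: Optional[str] = None  # stays None until a person signs off


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record that a named human has read and accepted the draft."""
    return Draft(draft.title, draft.body, approved_by=reviewer)


def publish(draft: Draft, upload: Callable[[Draft], None]) -> None:
    """Call the upload step only if a human has approved the draft."""
    if draft.approved_by is None:
        raise PermissionError("Refusing to publish: no human has approved this draft.")
    upload(draft)


if __name__ == "__main__":
    published = []  # stand-in for a real blog upload
    draft = Draft("Don't Trust, Definitely Verify", "edited text")
    try:
        publish(draft, published.append)  # blocked: nobody has reviewed it
    except PermissionError as err:
        print(err)
    publish(approve(draft, "the author"), published.append)  # allowed
    print(len(published), "post published")
```

The design choice is that the agent can prepare a draft but has no path to the upload step that skips `approve`.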

There have also been stories of AI hallucinations, such as made-up statistics, ending up in corporate presentations.

Be selective in your usage

I’m also using Stuck Edits in a fairly innocuous setting, asking it to proofread blog posts for me. When it decides that it can ignore rules (as I have, in fact, instructed it to do in certain instances), the result is a sentence in a blog post ending with a preposition so I don’t sound stuffy and boring.

But what if I were relying on it to do something far more significant? Say I asked it to analyze my employees’ performance, and it decided to spy on them to gauge productivity? That kind of setup is ripe for abuse.

We all have a responsibility for our work, or in this case the work of AI that we put our name to. A thoughtful choice when it comes to what we use AI for may prove that an ounce of prevention is worth more than a metric ton of cure.

Use your brain

It’s called artificial intelligence for a reason. You have actual intelligence — use it. Take time when crafting your prompts.

If you’re not sure what you’re doing, find one of the countless resources, courses and YouTube videos on prompt engineering so you know how the models will respond to what you ask for and how to get them to provide the most useful output.

Then use that awesome brain of yours and write prompts that don’t lead to AI scheming or an AI breach of ethics.

Photo by Nick Fewings on Unsplash